Cinematic Sovereignty: How China’s New Generation of AI Video Models Could Reshape U.S. Soft Power Projection

February 18, 2026

For a century, America’s most scalable influence engine was not a carrier strike group or a trade pact. It was a story. Hollywood did not just sell tickets. It normalized values, aesthetics, and aspirations. In the age of generative AI, that soft power advantage is no longer anchored to sound stages or studio distribution. It will increasingly be anchored to the default creative workflow stack.

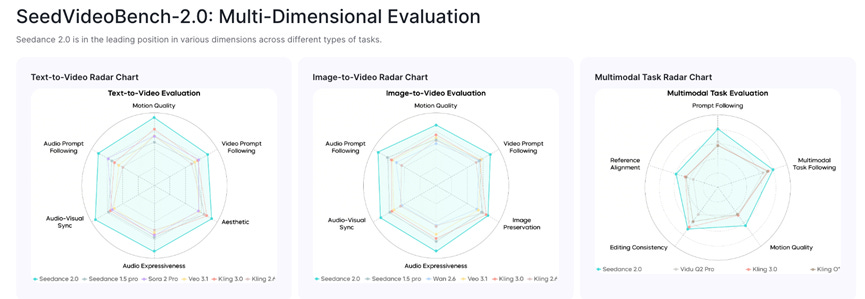

That is why ByteDance’s Seedance 2.0 and Kuaishou’s Kling 3.0 are geopolitically consequential. They are not merely better “text to video.” They are early world model engines: multimodal models that can generate coherent scenes, maintain character consistency across cuts, follow direction, and increasingly pair visuals with native audio, all from a single interface. ByteDance describes Seedance 2.0 as a unified audio-video joint generation model with major gains in physical realism and controllability for industrial-grade creation. Kuaishou positions Kling 3.0 as a major upgrade aimed at higher-fidelity generation, stronger prompt following, and creator-first controls such as storyboard-style workflows and finer motion control. These tools are more than evolutionary technical improvements. They are redefining the entire creative process, and the creator community is taking notice.

Figure 1: Video benchmark comparison of Seedance 2.0 vs. other global players. (ByteDance)

The strategic implication is simple. If creators and film studios around the world gravitate toward Chinese video tools for their cinematic capabilities, cost, and ease of use, then the center of narrative gravity shifts. Hollywood’s soft power does not vanish, but it stops being the default.

Why video matters more than text for influence

Text models can argue. Video models can show. Video compresses persuasion into seconds, crosses literacy barriers, and plugs directly into the dominant consumption format of the Global South and Gen Z everywhere. We saw that clearly in the influence of TikTok and RedNote (Xiaohongshu) over the last few years. Whoever supplies the most accessible cinematic generation platform gains an advantage in shaping global narratives.

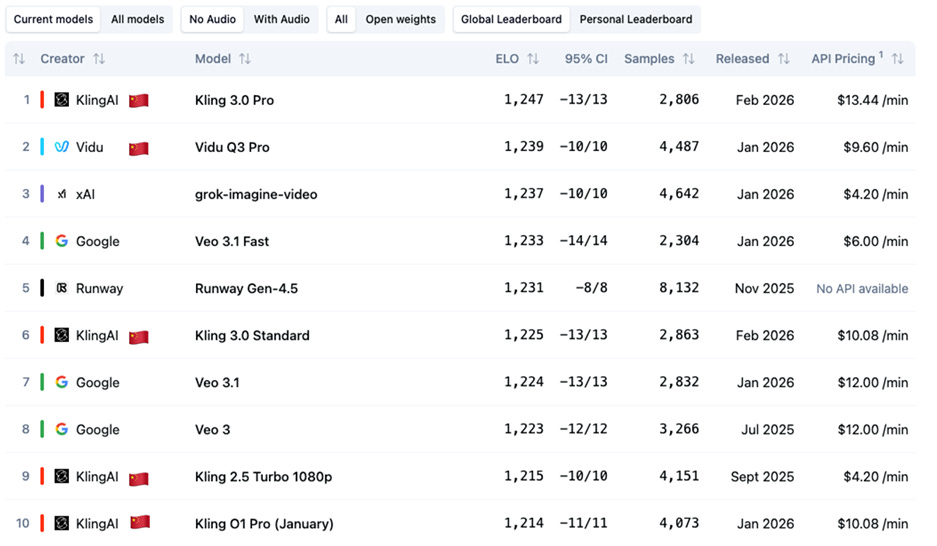

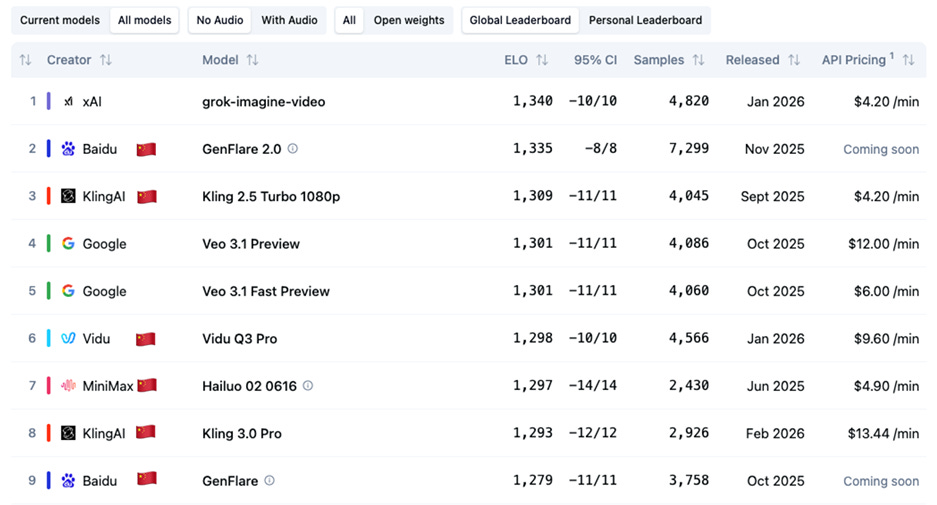

Public AI video leaderboards have demonstrated the prominence of Chinese AI video offerings over the last couple of years. Artificial Analysis’ Video Arena uses large-scale blind comparisons and Elo-style scoring (a dynamic, head-to-head rating system based on relative performance) to rank video models. Chinese players have repeatedly appeared at or near the top. Seedance 2.0 is so new that it is not yet fully represented, but that is exactly the point: China now ships faster than independent benchmarks can track, leaving the market to catch up. Early sample generations that influencers have made with Seedance 2.0 are going viral online and appear to be a clear leap ahead of any other video model on the market today. The tool appears to have the potential to produce, in minutes and for tens of dollars, cinematic scenes that traditionally cost millions.

Figure 2: Artificial Analysis’ Text-to-Video Arena Global Leaderboard: 5 of top 10 are Chinese. (Feb 15, 2026)

Figure 3: Artificial Analysis’ Image-to-Video Arena Global Leaderboard: 6 of top 9 are Chinese. (Feb 15, 2026)
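
For readers unfamiliar with Elo-style scoring, the following is a minimal sketch of how a head-to-head rating update works in general. It is not Artificial Analysis’ actual implementation, which this commentary does not describe; the function names, the K-factor of 32, and the 400-point scale are conventional textbook choices assumed here for illustration.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Predicted probability that model A wins a blind comparison against model B.

    Uses the standard Elo logistic curve with a 400-point scale (an assumption).
    """
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_ratings(rating_a: float, rating_b: float, a_won: bool, k: float = 32.0):
    """Return the new (rating_a, rating_b) after one head-to-head vote.

    k controls how far a single result moves the ratings (32 is an assumed default).
    """
    score_a = 1.0 if a_won else 0.0
    delta = k * (score_a - expected_score(rating_a, rating_b))
    # The winner gains exactly what the loser gives up, so ratings stay relative.
    return rating_a + delta, rating_b - delta


# Two hypothetical models start level; one viewer prefers model A's clip.
new_a, new_b = update_ratings(1000.0, 1000.0, a_won=True)
```

Because each update depends on the gap between the two ratings, a win over a higher-rated model moves the winner further than a win over a lower-rated one, which is why a new entrant needs many comparisons before its leaderboard position settles.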

Why Hollywood is disproportionately exposed

Hollywood’s moats were scalable high-quality production, access to funding, and global distribution. Generative video collapses the first moat, makes the second irrelevant, and, when combined with easy online distribution, dramatically weakens the third. Indie creators, advertisers, and small studios anywhere in the world can now produce cinematic-quality content for a small fraction of the cost and time. Having judged three AI film festivals in 2025, I can attest to the dramatic increase in the quality of AI-generated films over the last year.

As a “Deadpool & Wolverine” screenwriter warned in a recent article, “Hollywood is about to be revolutionized/decimated.” In recent days, SAG-AFTRA denounced ByteDance’s new video model as a “blatant infringement,” and Disney issued a cease-and-desist letter to ByteDance for allowing generation of its copyrighted characters. ByteDance has responded, “We are taking steps to strengthen current safeguards as we work to prevent the unauthorised use of intellectual property and likeness by users.” Hollywood clearly has the right to be concerned over IP misuse in this case, but it should be noted that OpenAI, Google, Meta, and xAI all allowed generation of copyrighted characters in their image and video models at initial release. So it is unclear whether this lack of respect for IP is unique to ByteDance and Chinese AI firms, or a broader systemic issue across the global AI industry, driven by the effort to generate launch buzz.

The longer-term effect is actually more threatening for U.S. soft power: attention fragments, and the share of global cultural bandwidth occupied by U.S. narratives declines, even if Hollywood film output and quality remain strong. With billions of “creators” worldwide given the means to produce high-quality content, it is inevitable that non-U.S. narratives will gain more traction and relevance. There will clearly be a flood of AI slop, but there are also storytelling geniuses all over the world who can now unleash their talents.

Open source is another accelerant. Seedance 2.0 and Kling 3.0 have not been announced as open weights yet, but China’s broader ecosystem is clearly embracing diffusion, in the sense of broad adoption. Alibaba has open-sourced its Wan video and image models, Tencent has open-sourced its 3D/video generation model Hunyuan, and Minimax has open-sourced models as well. In addition, most leading Chinese LLMs (DeepSeek, Moonshot AI, Z.ai, etc.) are open-weight and openly licensed. Diffusion beats perfection in platform transitions. U.S. AI vendors that offer their models only via API or apps often limit access based on IP address and location, shutting out users in a number of countries. Creators would have less to fear from such restrictions with Chinese offerings, making global adoption easier. Open, low-friction stacks win developers, plugins, and workflows, and eventually become the standard.

Why China is improving fast

China’s lead is structural. First, data gravity. China sits on the world’s densest short-video ecosystems. Kuaishou reported average daily active users of 409 million in Q2 2025, with users spending over two hours per day on the app. ByteDance touts well over a billion daily active users. Platforms of that scale generate enormous volumes of video, edits, audio, and preference signals that can accelerate iteration, tuning, and product fit.

Second, product feedback loops. Chinese platforms tend to ship quickly, collect engagement data, then retrain and fine-tune. In multimodal domains, distribution and preference data can matter as much as model architecture or compute capacity.

Third, strategic focus. The United States has concentrated prestige, capital, and policy attention on frontier language and coding models, often justified as the path to AGI (artificial general intelligence), the so-called “God models.” China has pushed aggressively into diffusion and real-world use cases, mostly via open-source/open-weight models and multimodal releases. Through a geopolitical lens, diffusion can be a more durable influence strategy than betting everything on a single “AGI finish line.”

Energy and the economics of inference

Video generation is compute-heavy, and compute is energy. In my recent Stanford Digitalist Paper, Beyond Rivalry, I argue that energy capacity increasingly functions as an input to AI power, and that China’s scale in power generation gives it leverage in cost and availability. Lower inference costs translate into broader adoption. I recently spoke with the head of data centers at a leading Chinese data center operator, who told me that a significant portion of the company’s AI inference traffic today comes from customers in the United States and the Middle East seeking the lower costs that cheaper energy enables. The United States may have a short-term lead on GPU supply, but the top U.S. labs are struggling to secure enough energy to power the next generation of chips they have bought.

How much of a factor is lax IP enforcement?

Intellectual property asymmetries are real, and uncomfortable. The U.S. Trade Representative’s 2025 Special 301 Report continues to cite widespread online piracy and enforcement challenges in China. That does not establish what any specific model was trained on. However, the uneven enforcement environment in China can expand the volume of data that is practically accessible for large-scale model training, lowering the cost and friction of data accumulation. In video generation, this can accelerate gains in model quality. At the same time, Google has access to a huge corpus of video data from its YouTube platform, and OpenAI has a history of misusing copyrighted content. That makes it unclear whether IP infringement alone explains the difference in model performance, especially given the significant compute advantage of Google and other U.S. labs.

Can the United States catch up, and should Washington play a bigger role?

Technically, yes. The harder problem is institutional and economic. If U.S. video stacks remain gated, expensive, and trapped in licensing uncertainty, they will lose the diffusion race. Washington should treat multimodal video and world models as soft power infrastructure. That means targeted public-private investment in creative AI technologies and in creators themselves, open evaluation benchmarks for controllability and safety, AI content transparency policy, and creator tooling that makes U.S. models more practical and trustworthy. It also means greater clarity in the licensing layer, so that training and compensation can coexist without paralyzing litigation, as well as confronting the energy bottleneck that keeps U.S. inference expensive.

None of this requires an arms race. As Paul Triolo, another CCA fellow, and I argued in our MIT Technology Review op-ed, “There can be no winners in a U.S.-China AI arms race.” The right approach is competitive resilience paired with narrow, durable cooperation on shared guardrails: provenance standards, greater IP protection, AI watermarking, and incident reporting for multimodal persuasion risks. In fact, greater cooperation with China on energy generation could make U.S. inference much more affordable, and serious discussions over IP licensing and protection could level the playing field for U.S. vendors.

As countries around the world pursue greater technological and cultural sovereignty, we have to confront the reality that it is becoming increasingly difficult for any one nation to direct the global narrative. Hollywood once projected America’s imagination and values around the world. In this new age of low-cost, high-quality generative video, imagination becomes a software supply chain. If the United States wants to preserve more of its narrative influence, it must compete where the infrastructure of persuasion is headed, while cooperating where national and global safety demands it.

Acknowledgment: The author gratefully acknowledges the anonymous CCA reviewer for thoughtful comments, as well as Jennifer Choo, CCA’s Director of Research and Strategy, and Jing Qian, Co-Founder and Managing Director of CCA and Vice President of Asia Society, for their substantive insights, editorial guidance, and support in the preparation of this commentary.