Misdiagnosing the U.S.–China AI Race: Recalibrating America’s Approach to an Incomplete Strategy

By Alvin W. Graylin, Honorary Senior Fellow on Technology at the Center for China Analysis

Washington’s AI strategy reflects genuine strategic concerns: that the United States is locked in a decisive race with China for artificial general intelligence (AGI), that whoever crosses the finish line first wins a permanent strategic advantage, and that maximum acceleration, paired with maximum denial of chips and technology to Beijing, is the only rational play. While the competition with China is real and the risks are genuine, key elements of this narrative deserve closer examination. And building a strategy on an incomplete understanding risks producing outcomes far worse than the threat it purports to address.

I write this not to minimize real security concerns, nor to suggest naïveté about Chinese strategic ambitions. After 35 years helping U.S. MNCs build technology businesses in China and leading AI, semiconductor and cybersecurity efforts across both ecosystems, I have seen how quickly assumptions about technological dominance can unravel. The argument here is simply this: we need to reexamine the race we think we’re running, the game we think we’re playing, and the strategy we’ve chosen. Because right now, the evidence suggests our current strategy may be incomplete or overconcentrated on a single dimension of competition.

Questioning the Decisive Strategic Advantage Thesis

The dominant narrative in Washington’s AI policy circles goes something like this: whoever achieves AGI first will gain a decisive strategic advantage (DSA), a durable, possibly permanent lead that reshapes the global balance of power. This belief drives hundreds of billions in capex commitments, aggressive export controls, and a general willingness to treat AI development as a wartime mobilization.

The DSA thesis depends on several assumptions, each of which deserves scrutiny. First, that there is a clear finish line. There is not. AI development is a continuous, multi-dimensional process with no single threshold that confers omnipotence. AGI, however defined, would not be a static superweapon but a rapidly evolving ecosystem that competitors can and will replicate. History reminds us: America’s nuclear monopoly lasted just four years.

Second, the thesis assumes that model capability gaps can be maintained through compute denial. Recent market data point in a different direction. The U.S.-China frontier model gap, once estimated at 12-14 months, has narrowed to roughly two to three months, during a period when the U.S. has steadily ramped up export controls on even mid-tier data center GPUs. On OpenRouter’s monthly leaderboard for April 2026, four of the top six most-used models globally were Chinese. Models from MiniMax, Kimi, DeepSeek, Xiaomi and Zhipu are all surging in popularity, driven by their cost advantages and by AI agents’ appetite for highly capable open-source models. In video generation, Kuaishou’s Kling 3.0 and ByteDance’s Seedance 2.0 significantly outperform OpenAI’s Sora and Google’s Veo on independent benchmarks and in real-world use cases, as discussed in my recent CCA essay.

Third, the DSA narrative assumes a winner-take-all dynamic. In practice, AI models are rapidly commoditizing. Cursor usage data from 2025 shows the top model changing nearly every month, with no single lab holding sustained dominance. The recent batch of Chinese models launched around Lunar New Year are all within a few percent of leading U.S. frontier models (at the time of release) but available at approximately 1/10th the API price. There are now even small Qwen models that run natively on an iPhone and perform at about the level of frontier models from a year ago. When the commodity is cheap and abundant, strategic advantage shifts from who builds the best model to who deploys AI most effectively across their economy.

And this is precisely where the current strategy is most in tension with its own goals. While Washington and Silicon Valley pour massive resources into chasing the elusive AGI finish line, Chinese companies are deploying “good enough” AI at massive scale. ByteDance’s Doubao chatbot exceeded 100 million daily active users. Alibaba’s Qwen models have surpassed 700 million downloads globally, spawning 180,000+ derivative models. Chinese open-source models are becoming the de facto platform for sovereign AI efforts across the Global South’s 150 Belt and Road Initiative partner countries. Leading open-source models are usually much smaller than closed-source frontier models, so they can be downloaded and installed on smaller on-premise systems, and the smaller models run smoothly on personal laptops and even modern phones. This is a significant diffusion advantage: it offers greater data sovereignty and privacy while also lowering deployment costs domestically and globally.

Today, most Chinese models are designed to run on Nvidia chips, but Chinese companies are already developing alternatives to Nvidia’s GPUs, and a number of the new Chinese models (e.g., DeepSeek and Zhipu) are already being optimized for them, with some already trained on them. As Chinese domestic chips mature, the long-term plan is to offer full-stack solutions to the international market. Denying U.S. chips to the Chinese ecosystem now will only accelerate the drive for domestic alternatives, speeding local innovation and reducing reliance on U.S. technology.

Beyond the Arms Race Framework

The U.S. approach treats the AI competition as an arms race: zero-sum, secretive, defined by denial and containment. But what’s actually unfolding looks far more like a platform race and an innovation race, where value expands, multiple winners coexist, and the key metric is diffusion: how broadly and effectively AI is adopted across industries, not who achieves the highest benchmark score this week.

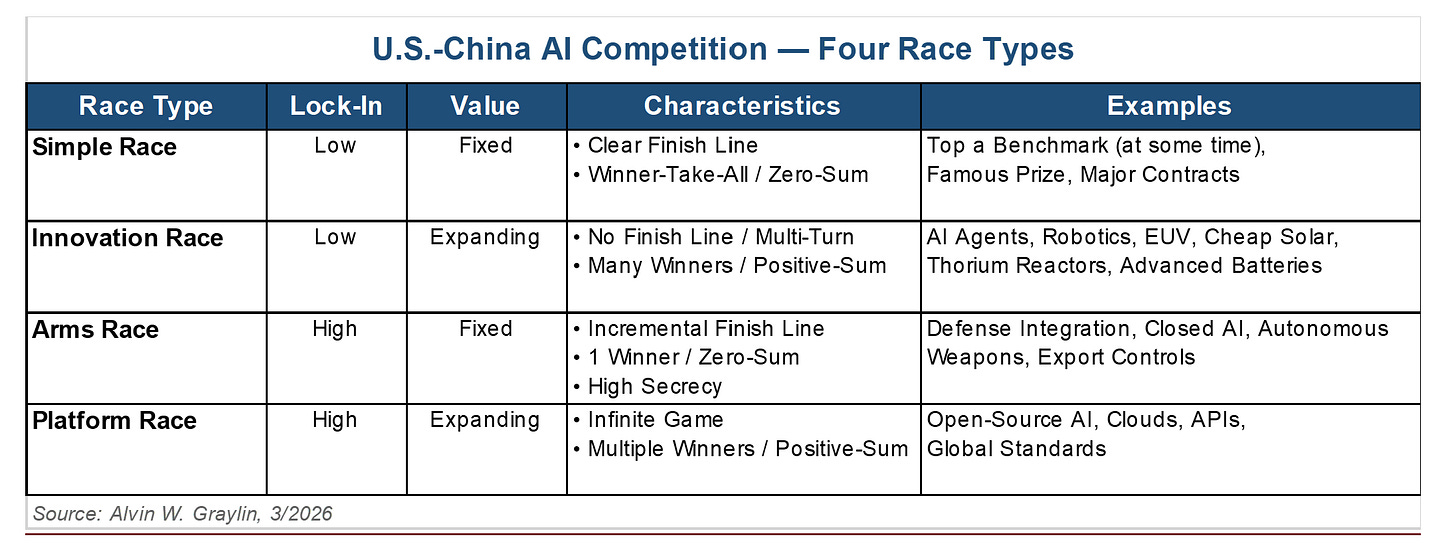

Consider the four types of AI competition. In a simple race (first to a benchmark), there’s a clear finish line and a single winner, but in practice, benchmark leads flip monthly and confer no durable advantage. In an arms race, secrecy and denial dominate, but the AI technology stack is too diffuse and fast-moving to contain. In an innovation race, the game is multi-turn with many winners—think robotics, agentic AI, and clean energy integration. In a platform race, it’s an infinite game where ecosystem adoption determines outcomes—think open-source AI, cloud APIs, global developer communities.

The United States is investing heavily in arms race and simple race dynamics. China, increasingly, is competing in the innovation and platform quadrants (which offer more long-term value). The results speak for themselves: China leads in 66 of 74 critical technologies tracked by the Australian Strategic Policy Institute, dominates open-source AI model offerings, accounts for 54% of global industrial robot installations, produces about half of the world’s AI researchers, and builds more new electricity capacity annually than the rest of the world combined. These are the foundations of AI deployment at scale, and chip denial alone can’t offset them.

There are claims that most Chinese AI advances are due to stealing technology or distilling models from the United States. Given that more than 40% of the world’s AI papers are now published by researchers in China, and about half of the world’s AI researchers were born and educated in China, this claim is hard to justify. As background on distillation, it is commonly recognized that most, if not all, labs practice it internally and on each other (even domestically) to optimize model accuracy and efficiency. Since the frontier U.S. models are closed-source, Chinese labs are more easily tracked if and when they do it. Most Chinese models, by contrast, are open-source, so they can be run on users’ local machines or hosted by each country’s domestic cloud services, and Chinese labs cannot track their models’ detailed usage. Recently Cursor ran into PR trouble when it claimed to have trained its own advanced AI coding model (Composer 2), and it turned out to be a fine-tune of the Chinese Kimi K2.5 model. Additionally, Chinese labs usually publish their new technical findings as detailed papers with each major model release, which reduces the need for U.S. labs to distill new Chinese models in order to learn from their breakthroughs.

Rethinking the Strategic Framework

Washington’s strategic framework implicitly models the U.S.–China AI dynamic as a Prisoner’s Dilemma, a game where defection (competing aggressively, withholding cooperation) is the dominant strategy. But this framing has two fatal flaws.

First, the Prisoner’s Dilemma assumes a one-shot game with no communication. We live in a multi-turn world where actions are observable and reputations accumulate. In such repeated games, reciprocal strategies such as tit-for-tat, which start with cooperation and then mirror the other side’s previous move, have proven highly effective. We can see China’s actions and words. They can see ours. We are not in separate interrogation rooms.
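The difference between the one-shot and the multi-turn game can be illustrated with a minimal simulation. This is only a sketch under stated assumptions: the payoff numbers are the conventional textbook values for a Prisoner’s Dilemma, not figures from this essay, and the function names (`tit_for_tat`, `always_defect`, `play`) are hypothetical labels chosen for illustration.

```python
# Illustrative payoffs (assumed, conventional textbook values; not from the essay).
# "C" = cooperate, "D" = defect. Each entry is (payoff to A, payoff to B).
PAYOFFS = {
    ("C", "C"): (3, 3),
    ("C", "D"): (0, 5),
    ("D", "C"): (5, 0),
    ("D", "D"): (1, 1),
}


def tit_for_tat(own_history, other_history):
    """Cooperate on the first move, then copy the other side's previous move."""
    return "C" if not other_history else other_history[-1]


def always_defect(own_history, other_history):
    """Defect every round, the dominant choice in a one-shot game."""
    return "D"


def play(strategy_a, strategy_b, rounds=10):
    """Play a repeated game and return the total score of each side."""
    hist_a, hist_b = [], []
    score_a = score_b = 0
    for _ in range(rounds):
        move_a = strategy_a(hist_a, hist_b)
        move_b = strategy_b(hist_b, hist_a)
        pay_a, pay_b = PAYOFFS[(move_a, move_b)]
        score_a += pay_a
        score_b += pay_b
        hist_a.append(move_a)
        hist_b.append(move_b)
    return score_a, score_b


if __name__ == "__main__":
    print("reciprocity vs reciprocity:", play(tit_for_tat, tit_for_tat))
    print("reciprocity vs defection:  ", play(tit_for_tat, always_defect))
    print("defection vs defection:    ", play(always_defect, always_defect))
```

Under these assumed payoffs, two reciprocating players each earn far more over ten rounds than two permanent defectors. A defector does edge out a reciprocator head-to-head, but only by the margin of the first round, and both end up far below what mutual cooperation would have paid.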

Second, and more fundamentally, we are playing the wrong game entirely. The better model is the Stag Hunt. In this classic game, two hunters enter a forest and must decide: cooperate to catch a stag that feeds both for a month, or hunt hares separately that provide a week’s sustenance each. The stag requires both hunters working together. If one goes for the stag while the other chases hares, the stag hunter goes home empty-handed.

The United States is currently going after the stag, the projected artificial superintelligence (ASI) that promises permanent dominance. China, meanwhile, hunts the hares: practical AI diffusion across manufacturing, healthcare, education, and sovereign AI platforms for the developing world. The Stag Hunt has two pure-strategy Nash equilibria: both cooperate (stag) or both hunt alone (hares). But the worst outcome is precisely where the United States finds itself now: pursuing the stag alone while the other player collects reliable, real-world gains from hares.

A more accurate and nuanced game-theoretic representation of the world today is likely closer to a multi-turn Stag Hunt for the commercial market and a multi-turn Prisoner’s Dilemma for national security. In both cases, increasing communication and finding common ground would create a better outcome for both sides. A more effective approach is to adopt a multi-turn Stag Hunt strategy in which we first prioritize the hare equilibrium (diffusion focus) to address immediate risks and capture AI’s economic benefits, then later jointly pursue the long-term stag equilibrium in collaboration with China, once we have a better sense of how to manage the shared safety risks of a truly transformative AI (ASI). Given the clear interdependence of both sides across multiple dimensions, this would deliver the optimal outcome, without the excessive capex costs or potential societal and safety risks of our current path.
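The Stag Hunt described above can be made concrete with a small payoff table. The sketch below is illustrative only. It assumes payoffs measured in weeks of food per hunter: four weeks each for a shared stag (reading “a month” as roughly four weeks), one week for a hare, and nothing for a hunter who pursues the stag alone. The names `PAYOFFS`, `is_nash`, and `pure_nash_equilibria` are hypothetical.

```python
from itertools import product

ACTIONS = ("stag", "hare")

# Assumed payoffs in weeks of food, as (hunter A, hunter B).
# A shared stag feeds each hunter for about four weeks, a hare for one week,
# and a lone stag hunter goes home empty-handed.
PAYOFFS = {
    ("stag", "stag"): (4, 4),
    ("stag", "hare"): (0, 1),
    ("hare", "stag"): (1, 0),
    ("hare", "hare"): (1, 1),
}


def is_nash(action_a, action_b):
    """True if neither hunter can gain by switching actions unilaterally."""
    pay_a, pay_b = PAYOFFS[(action_a, action_b)]
    a_cannot_improve = all(PAYOFFS[(alt, action_b)][0] <= pay_a for alt in ACTIONS)
    b_cannot_improve = all(PAYOFFS[(action_a, alt)][1] <= pay_b for alt in ACTIONS)
    return a_cannot_improve and b_cannot_improve


def pure_nash_equilibria():
    """List every pure-strategy profile that is a Nash equilibrium."""
    return [(a, b) for a, b in product(ACTIONS, ACTIONS) if is_nash(a, b)]


if __name__ == "__main__":
    print("Pure-strategy equilibria:", pure_nash_equilibria())
    print("Lone stag hunter vs hare hunter:", PAYOFFS[("stag", "hare")])
```

With these assumed numbers, the only stable outcomes are both hunting the stag or both hunting hares. The mismatched profile, one side chasing the stag alone, is not an equilibrium and leaves the stag hunter with the lowest payoff in the table.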

The consequences of this mismatch are not theoretical. U.S. tech firms have committed over $800 billion annually in AI capex for 2025–2027, while job openings in the United States have declined sharply since 2022 even as the S&P 500 soared, a divergence that recent Stanford research attributes to AI automation of early-career positions in software development and customer service. World Bank data shows the U.S. workforce faces over 60% AI exposure, the highest globally, compared to about 40% in China. We are building the most powerful automation technology in history while having no plan for the workers it will displace.

The loudest proponents of the DSA narrative are AI labs and their investors, who have strong commercial interests in lighter regulation and increased government funding. The refrain from Silicon Valley labs that “we can’t be regulated because if we slow down, China won’t” is not based on facts. China’s AI industry is one of the most heavily regulated in the world, and its infrastructure buildout is just a fraction of the size of that of the United States. Putting in some sensible guardrails and regulations for AI development and deployment around safety, privacy, and security would not slow down the United States, but rather help ensure a less chaotic diffusion into broader society. In fact, China is slowing down its own AI labs by restricting their ability to buy Nvidia GPUs. Even after U.S. export control rules allowed H200 GPUs to be sold to China, the Chinese government has required its companies to obtain approval to buy them. Recent remarks from Howard Lutnick suggest that to date, zero H200 GPUs have been sold to China.

The Military Dimension: From Benchmarks to Operational Readiness

Proponents (government and industry) of the current strategy often invoke national security: “If China gets AGI first, they’ll weaponize it against us or would prevent us from winning.” This is a legitimate security concern worthy of serious attention. However, the military advantage argument warrants closer examination. What the military needs is not the latest frontier model. It needs models that are fit to task, certified, tested, and integrated into operational systems. Here, strategic advantage depends not just on the invention of frontier models but on the institutional capacity to operationalize them, integrating them into doctrine, training personnel, and adapting operational concepts to leverage their capabilities.

The U.S. military’s vendor and model certification process can take over 12 months for national security level accreditation (DoD IL6). Reports suggest that the recent Iran military operations were planned using the 20-month-old Claude 3.5 Sonnet model, not because newer models didn’t exist but because the certification pipeline couldn’t keep pace and because standard safety guardrails had to be removed from the base consumer model. Meanwhile, Chinese government agencies have far fewer testing and evaluation (T&E) delays, and their AI models already go through review by the government before public release. The gap between invention and deployment matters more than the gap between benchmarks.

In military settings, the tangible advantage may lie less in who trains the most capable model than in who can certify, integrate, and deploy usable systems fastest. On that metric, the current U.S. system is at a structural disadvantage. But even if we achieve ASI first and can somehow expedite the certification process, unless we are willing to deploy it untested in a pre-emptive all-out strike against China, the temporal advantage could evaporate in a matter of weeks or months. It’s unclear that this result would justify the trillions in spending. And even if such an attack succeeded in taking down China’s infrastructure, the inevitable response would be swift and devastating for both sides. It’s the exact reason why we have not seen the use of nuclear weapons in real conflict for over 80 years.

In recent weeks, the new Anthropic Mythos model has attracted a lot of attention for its cybersecurity prowess, and some point to this as evidence that the United States is “winning” and the current strategy is correct. But unlike large quantities of fissile material, software and know-how are almost impossible to secure. There are already reports that the Mythos model is now accessible to unauthorized personnel, not to mention the recent unintended leak of the full source code of Anthropic’s Claude Code solution. New benchmarks also show that the OpenAI GPT 5.5 model matches Mythos in cybersecurity capabilities, and that model is publicly available to all users, showing that any perceived cyber advantage is highly temporary. We need to accept that any software-based advantage is temporary and adjust our strategy to the reality that DSA may just be a mirage. In fact, the bigger and more certain threat is the misuse of these advanced models by bad actors. Hackers and terrorists would not hesitate to make use of these models for nefarious purposes, whereas state actors still fear reprisals and the risk of escalation. This is a shared risk for both the United States and China, and a place where we can find common ground for cooperation.

What We’re Not Seeing: A Competitive Ecosystem, Not a Monolith

Washington tends to view Chinese AI as a state-directed monolith. The reality is a fiercely competitive commercial ecosystem where Chinese labs compete with each other (just to survive) far more than they coordinate to conspire against the United States. ByteDance’s Doubao is a closed-source consumer product fighting for domestic market share. Z.AI generates over 60% of its revenue from enterprise on-premise deployments. MiniMax earns roughly 70% of revenue from international API sales. Moonshot AI’s Kimi competes directly with all of them in consumer chatbots and AI agent inference. As an example, neither the Chinese nor U.S. government had any significant idea about DeepSeek’s innovations prior to its rise to prominence in January 2025.

The open-source strategy that has made Chinese models globally ubiquitous is an emergent outcome of commercial competition, not a directed national strategy. Alibaba open-sourced Qwen to drive cloud adoption; DeepSeek open-sourced to attract research talent; MiniMax did so to build developer ecosystems. Even within these companies, there are significant internal tensions. Alibaba is actively debating open-source diffusion versus revenue protection, and Tencent faces similar strategic discussions on agent-focused models. Framing this diverse, commercially motivated ecosystem as a unified strategic threat leads to policy responses that are simultaneously too broad (restricting all Chinese AI) and too narrow (focused on chips while ignoring the deployment gap). That said, while these firms operate with substantial commercial independence, they remain embedded in a system where the Chinese state retains the ability to shape or direct their alignment when it perceives critical national interests to be at stake. The recent push by the Chinese government for some of its leading labs to optimize models for local chip offerings before release is an example of this.

Additionally, it’s worth mentioning that U.S. export control policies also cover advanced tools and equipment, targeting chip and semiconductor manufacturing players (e.g., Huawei and SMIC) with the core aim of constraining compute supply to Chinese AI labs. These players have a clear association with the state, and such restrictions have shown a near-term effect in limiting their capabilities, but have also clearly accelerated the development of domestic alternatives, albeit ones still one to two generations behind the most advanced Western offerings. As the two ecosystems bifurcate, China will depend less and less on the United States, leaving less leverage for future negotiations.

A Different Path Forward

None of this means we should be complacent about security. Genuine threats exist: AI-enabled CBRNE (Cyber, Bio, Radioactive, Nuclear, Explosive) risks, autonomous weapons proliferation, and the misuse of AI by bad actors. But these threats are best addressed through targeted, narrowly scoped controls and shared intelligence, not through a blanket technology-denial strategy that was designed for a world that no longer exists.

The “small yard, high fence” doctrine, originally conceived as a targeted national security tool, has expanded into a comprehensive technology approach that now touches chips, lithography, EDA software, HBM, chemicals, and advanced AI models, as discussed in a recent CCA article. This expansion has paradoxically accelerated China’s drive toward full supply chain independence, fueled innovations born of constraint (like DeepSeek’s efficiency breakthroughs), and alienated allied semiconductor industry firms who see shrinking addressable markets. That said, export controls serve legitimate national security purposes, and policymakers may be operating with intelligence assessments and threat evaluations not available in the public domain.

Adjusting course does not require abandoning competition. It requires reframing it. Three shifts would immediately improve America’s strategic position:

First, make efforts to narrow export controls to genuine military and CBRNE threats, not all advanced technology. The current regime tries to prevent China from developing any frontier capability, which is both unenforceable and counterproductive. In fact, given Chinese labs’ propensity for open-sourcing their models, most advances they produce will naturally flow into U.S. models rapidly, turning them into subsidiary development arms for U.S. players. It must still be noted that in the unlikely event of direct conflict, having some export controls may still buy time for domestic adaptation, alliance coordination, and military hardening even if such controls cannot achieve the wished-for permanent dominance.

Second, shift from a Cold War arms-race mental model to a Space Race innovation model. The Space Race generated GPS, Teflon, and the internet’s precursor, bringing massive spillover benefits for the whole economy. An AI innovation race focused on healthcare, climate, education, and productivity could do the same, with far more participants winning. It would also encourage U.S. labs to release open-source models comparable to China’s, so our tech stack can compete on a level playing field for global diffusion. Additionally, enacting some reasonable safety and social-responsibility regulations on U.S. labs (as China has already done) would lessen the societal fear of AI that has been growing domestically, a fear that is certain to hinder the nation’s ability to deploy this technology effectively into the economy and to reduce our global competitiveness.

Third, open bilateral and multilateral channels on AI safety. The Stag Hunt only works when hunters can communicate before entering the forest. Both China’s “Global AI Governance Action Plan” and America’s “AI Action Plan” were released in July 2025, and neither mentions the other country. We cannot manage the multifaceted risks of the most powerful technology in human history while refusing to talk to the other nation building it. There’s clear common ground on AI safety: both countries want to avoid catastrophic risks from runaway AI, and both have a shared interest in preventing the proliferation of AI-enabled bad actors.

Conclusion: There Is No Finish Line, but Many Medals to Win

A race is not a race if the competitors are headed to different destinations. The United States is sprinting toward AGI and ASI as if crossing that threshold confers permanent dominance. China is building industrial AI infrastructure that will shape how much of the world deploys this technology for decades. Both paths have value, and neither is inherently zero-sum if proper coordination occurs.

The biggest risks we face from AI are misuse by bad actors, mass displacement of workers, concentration of economic power, and escalation of military conflict. These are shared risks that require shared solutions. Every dollar spent on denial should be matched by greater investment in national preparedness for AI diffusion and adaptation. Every diplomatic channel closed over chips is a safety conversation not happening. A deeper discussion on the underlying technology issues and a detailed framework for addressing both economic and geopolitical issues around the AI race can be found in this Stanford paper, Beyond Rivalry.

It is time to lift the veil of assumptions that have constrained our thinking. The playing field is not the one we were told exists. But the real one offers enormous opportunities if we have the clarity to see it and the courage to adjust course. There is no finish line in AI, but along the way, there will be many medals awarded. We should make sure America is competing for the ones that actually matter.

Utterly fascinating and propounding a thesis that is cogently explored. It offers a way out of the cul de sac of current thinking.

The part of China’s AI ecosystem that U.S. strategists most easily underread is the diffusion power of Chinese open-source models. Once model capability spreads at low cost, it stops being just a lab achievement and starts becoming a form of industrial organization.

The most valuable part of Alvin Graylin’s essay is that it challenges the way U.S. strategy circles misunderstand how AI actually produces power. AI is not a nuclear weapon. It is not a single breakthrough that can be monopolized for decades after one decisive discovery. It is closer to a combination of electricity, the internet, cloud computing, and industrial automation. Its real power does not come only from the highest frontier model. It comes from who can embed that capability into the economy and industrial system at lower cost, larger scale, and faster speed.

When a model’s API cost can fall to a fraction, or even one-tenth, of comparable U.S. models, and when open-source models can be quickly adapted by enterprises, local developers, overseas startups, and government platforms, the center of AI competition shifts. It moves from who can build the strongest model to who can make model capability cheaper, easier, and more widely usable.

This is also why the essay’s critique of U.S. export controls matters. Washington originally hoped to slow China’s AI catch-up by restricting chips, EDA tools, semiconductor equipment, HBM, advanced packaging, and high-end models. But the problem is that the more the scope of restrictions expands, the more it pushes China toward a clearer independent technology-stack path. That is the central irony of America’s AI strategy.

This is also the core argument of my earlier essay (https://leonliao.substack.com/p/ai-chip-controls-may-just-build-chinas?r=731anr&utm_medium=ios) on U.S. technology blockades against China: America’s restrictions will not simply stop China’s rise. They will accelerate China’s effort to build its own independent technology stack.

And this is exactly what I have been trying to capture in my series, The Great Partition of Global AI (https://leonliao.substack.com/p/the-great-partition-of-global-aifrom?r=731anr&utm_medium=ios). The global AI ecosystem is being partitioned. This does not necessarily mean a complete rupture, but it does mean a gradual layering of models, chips, cloud infrastructure, developer ecosystems, data centers, power systems, application scenarios, and national-security rules.

The United States may still dominate the highest-end frontier AI narrative. China, meanwhile, may build another diffusion system around low-cost open-source models, industrial deployment, Global South markets, and a domestic technology stack.

If Washington continues to misread the AI race as a sprint toward AGI, it may underestimate the long-term power of this alternative path.